Lately, I’ve been seeing more and more posts on social media asking for testing suggestions for students who exhibit subtle language-based difficulties. Many of these children are typically referred for initial assessments or reassessments as part of advocate/attorney involved cases, while others are being assessed due to the parental insistence that something “is not quite right” with their language and literacy abilities, even in the presence of “good grades.” Continue reading Comprehensive Assessment of Elementary Aged Children with Subtle Language and Literacy Deficits


Category: Processing Disorders

Neuropsychological or Language/Literacy: Which Assessment is Right for My Child?

Several years ago I began blogging on the subject of independent assessments in speech pathology. First, I wrote a post entitled “Special Education Disputes and Comprehensive Language Testing: What Parents, Attorneys, and Advocates Need to Know”, in which I used 4 different scenarios to illustrate the importance of comprehensive language evaluations for children with subtle language and learning needs. Then I wrote “What Makes an Independent Speech-Language-Literacy Evaluation a GOOD Evaluation?” in order to elucidate what actually constitutes a good independent comprehensive assessment.


Have I Got This Right? Developing Self-Questioning to Improve Metacognitive and Metalinguistic Skills

Many of my students with Developmental Language Disorders (DLD) lack insight and have poorly developed metalinguistic (the ability to think about and discuss language) and metacognitive (the ability to think about and reflect upon one’s own thinking) skills. This, of course, creates a significant challenge for them in both social and academic settings. Not only do they have a poorly developed inner dialogue for critical thinking purposes, but they also present with significant self-monitoring and self-correcting challenges during speaking and reading tasks.


It’s All Due to …Language: How Subtle Symptoms Can Cause Serious Academic Deficits

Scenario: Len is a 2nd-grade student, aged 7 years and 2 months, who struggles with reading and writing in the classroom. He is very bright and has a high average IQ, yet when he is speaking he frequently can’t get his point across to others due to excessive linguistic reformulations and word-finding difficulties. The problem is that Len passed all the typical educational and language testing with flying colors, receiving average scores across the board on various tests including the Woodcock-Johnson Fourth Edition (WJ-IV) and the Clinical Evaluation of Language Fundamentals-5 (CELF-5). Stranger still is the fact that he aced the Comprehensive Test of Phonological Processing, Second Edition (CTOPP-2), so he is not even eligible for a “dyslexia” diagnosis. Len is clearly struggling in the classroom with expressing himself coherently, telling stories, understanding what he is reading, and putting his thoughts on paper. His parents have compiled impressively huge folders containing examples of his struggles. Yet because of his performance on the basic standardized assessment batteries, Len does not qualify for any functional assistance in the school setting, despite being virtually functionally illiterate in second grade.


The truth is that Len is quite a familiar figure to many SLPs, who at one time or another have encountered such a student and asked for guidance regarding the appropriate accommodations and services for him on various SLP-geared social media forums. But what makes Len such an enigma, one may inquire? Surely if the child had tangible deficits, wouldn’t standardized testing at least partially reveal them?

Well, it all depends, really, on what type of testing was administered to Len in the first place. A few years ago I wrote a post entitled: “What Research Shows About the Functional Relevance of Standardized Language Tests”. What researchers found is that there is a “lack of a correlation between frequency of test use and test accuracy, measured both in terms of sensitivity/specificity and mean difference scores” (Betz et al., 2013, p. 141). Furthermore, they also found that the most frequently used tests were the comprehensive assessments, including the Clinical Evaluation of Language Fundamentals and the Preschool Language Scale, as well as one-word vocabulary tests such as the Peabody Picture Vocabulary Test. The most damaging finding was that “frequently SLPs did not follow up the comprehensive standardized testing with domain-specific assessments (critical thinking, social communication, etc.) but instead used the vocabulary testing as a second measure” (Betz et al., 2013, p. 140).

In other words, many SLPs only use the tests at hand rather than the RIGHT tests aimed at identifying the student’s specific deficits. But the problem doesn’t actually stop there. Due to the variation in psychometric properties of various tests, many children with language impairment are overlooked by standardized tests, either because they receive scores within the average range or because their scores are not low enough to qualify for services.

Thus, “the clinical consequence is that a child who truly has a language impairment has a roughly equal chance of being correctly or incorrectly identified, depending on the test that he or she is given.” Furthermore, “even if a child is diagnosed accurately as language impaired at one point in time, future diagnoses may lead to the false perception that the child has recovered, depending on the test(s) that he or she has been given” (Spaulding, Plante & Farinella, 2006, p. 69).

There is, of course, yet another factor affecting our hypothetical client, and that is his relatively young age. This is especially evident in many educational and language tests for children in the 5-7 age group. Because the bar is set so low concept-wise for these age groups, many children with moderate language and literacy deficits can pass these tests with flying colors, only to be flagged by them literally two years later and be identified with deficits far too late in the game. Coupled with the fact that many SLPs do not utilize non-standardized measures to supplement their assessments, Len is in a pretty serious predicament.

But what if there was a do-over? What could we do differently for Len to rectify this situation? For starters, we need to pay careful attention to his deficit profile in order to choose appropriate tests to evaluate his areas of need. This can be accomplished in a number of ways. The SLP can interview Len’s teacher and his caregiver/s in order to obtain a summary of his pressing deficits. Depending on the extent of the reported deficits, the SLP can also provide them with a referral checklist to mark off the most significant areas of need.

In Len’s case, we already have a pretty good idea regarding what’s going on. We know that he passed basic language and educational testing, so in the words of Dr. Geraldine Wallach, we need to keep “peeling the onion” via the administration of more sensitive tests to tap into Len’s reported areas of deficits which include: word-retrieval, narrative production, as well as reading and writing.

For that purpose, Len is a good candidate for the administration of the Test of Integrated Language and Literacy (TILLS), which was developed to identify language and literacy disorders, has good psychometric properties, and contains subtests for assessment of relevant skills such as reading fluency, reading comprehension, phonological awareness, spelling, as well as writing in school-age children.


Given Len’s reported history of narrative production deficits, Len is also a good candidate for the administration of the Social Language Development Test Elementary (SLDTE). Here’s why. Research indicates that narrative weaknesses significantly correlate with social communication deficits (Norbury, Gemmell & Paul, 2014). As such, it’s not just children with Autism Spectrum Disorders who present with impaired narrative abilities. Many children with developmental language disorder (DLD) (#devlangdis) can present with significant narrative deficits affecting their social and academic functioning, which means that their social communication abilities need to be tested to confirm or rule out the presence of these difficulties.


However, standardized tests are not enough, since even the best standardized tests have significant limitations. As such, several non-standardized assessments in the areas of narrative production, reading, and writing may be recommended for Len to meaningfully supplement his testing.

Let’s begin with an informal narrative assessment, which provides detailed information regarding microstructural and macrostructural aspects of storytelling as well as the child’s thought processes and socio-emotional functioning. My nonstandardized narrative assessments are based on the book elicitation recommendations from the SALT website. For 2nd graders, I use the book by Helen Lester entitled Pookins Gets Her Way. I first read the story to the child, then cover up the words and ask the child to retell the story based on pictures. I read the story first because: “the model narrative presents the events, plot structure, and words that the narrator is to retell, which allows more reliable scoring than a generated story that can go in many directions” (Allen et al., 2012, p. 207).

As the child is retelling his story I digitally record him using the Voice Memos application on my iPhone, for a later transcription and thorough analysis. During storytelling, I only use the prompts: ‘What else can you tell me?’ and ‘Can you tell me more?’ to elicit additional information. I try not to prompt the child excessively since I am interested in cataloging all of his narrative-based deficits. After I transcribe the sample, I analyze it and make sure that I include the transcription and a detailed write-up in the body of my report, so parents and professionals can see and understand the nature of the child’s errors/weaknesses.

Now we are ready to move on to a brief nonstandardized reading assessment. For this purpose, I often use the books from the Continental Press series entitled Reading for Comprehension, which contains books for grades 1-8. After I confirm with either the parent or the child’s teacher that the selected passage is reflective of the complexity of work presented in the classroom for his grade level, I ask the child to read the text. As the child is reading, I calculate the number of words he reads correctly per minute and note what types of errors the child is exhibiting during reading. Then I ask the child to state the main idea of the text, summarize its key points, define select text-embedded vocabulary words, and answer a few verbally presented reading comprehension questions. After that, I provide the child with the accompanying worksheet of 5 multiple-choice questions and ask the child to complete it. I analyze my results in order to determine whether I have accurately captured the child’s reading profile.
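For readers who like to see the arithmetic spelled out, here is a minimal sketch of the words-correct-per-minute calculation mentioned above. The function names and the sample numbers are hypothetical and chosen purely for illustration; this is simply the usual "words read correctly, scaled to one minute" computation, not a scoring procedure taken from any particular test manual.

```python
def words_correct_per_minute(words_attempted: int, errors: int, seconds: float) -> float:
    """Return the number of words read correctly, scaled to a one-minute rate.

    words_attempted: total words the child read aloud (hypothetical input)
    errors: words read incorrectly, e.g., substitutions or omissions
    seconds: how long the reading took, in seconds
    """
    if seconds <= 0:
        raise ValueError("seconds must be greater than zero")
    if errors < 0 or errors > words_attempted:
        raise ValueError("errors must be between 0 and words_attempted")
    words_correct = words_attempted - errors
    return words_correct * 60.0 / seconds


def reading_accuracy_percent(words_attempted: int, errors: int) -> float:
    """Return the percentage of attempted words that were read correctly."""
    if words_attempted <= 0:
        raise ValueError("words_attempted must be greater than zero")
    if errors < 0 or errors > words_attempted:
        raise ValueError("errors must be between 0 and words_attempted")
    return 100.0 * (words_attempted - errors) / words_attempted


if __name__ == "__main__":
    # Made-up example: 120 words attempted, 6 errors, read in 90 seconds.
    print(words_correct_per_minute(120, 6, 90))
    print(reading_accuracy_percent(120, 6))
```

Keeping the rate and the accuracy percentage as two separate numbers is a deliberate choice in this sketch: a child can read slowly but accurately, or quickly but with many errors, and the two patterns point to different areas of need.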


Finally, if any additional information is needed, I administer a nonstandardized writing assessment, which I base on the Common Core State Standards for 2nd grade. For this task, I provide a student with a writing prompt common for second grade and give him a period of 15-20 minutes to generate a writing sample. I then analyze the writing sample with respect to contextual conventions (punctuation, capitalization, grammar, and syntax) as well as story composition (overall coherence and cohesion of the written sample).

The above relatively short assessment battery (2 standardized tests and 3 informal assessment tasks) which takes approximately 2-2.5 hours to administer, allows me to create a comprehensive profile of the child’s language and literacy strengths and needs. It also allows me to generate targeted goals in order to begin effective and meaningful remediation of the child’s deficits.

Children like Len will, unfortunately, remain unidentified unless they are administered more sensitive tasks to better understand their subtle pattern of deficits. Consequently, to ensure that they do not fall through the cracks of our educational system due to misguided overreliance on a limited number of standardized assessments, it is very important that professionals select the right assessments, rather than the assessments at hand, in order to accurately determine the child’s areas of needs.

References:

- Allen, M, Ukrainetz, T & Carswell, A (2012) The narrative language performance of three types of at-risk first-grade readers. Language, Speech, and Hearing Services in Schools, 43(2), 205-221.

- Betz et al. (2013) Factors Influencing the Selection of Standardized Tests for the Diagnosis of Specific Language Impairment. Language, Speech, and Hearing Services in Schools, 44, 133-146.

- Hasbrouck, J. & Tindal, G. A. (2006). Oral reading fluency norms: A valuable assessment tool for reading teachers. The Reading Teacher, 59(7), 636-644.

- Norbury, C. F., Gemmell, T., & Paul, R. (2014). Pragmatics abilities in narrative production: a cross-disorder comparison. Journal of child language, 41(03), 485-510.

- Peña, E.D., Spaulding, T.J., & Plante, E. (2006). The Composition of Normative Groups and Diagnostic Decision Making: Shooting Ourselves in the Foot. American Journal of Speech-Language Pathology, 15, 247-254.

- Spaulding, Plante & Farinella (2006) Eligibility Criteria for Language Impairment: Is the Low End of Normal Always Appropriate? Language, Speech, and Hearing Services in Schools, 37, 61-72.

- Spaulding, Szulga, & Figueroa (2012) Using Norm-Referenced Tests to Determine Severity of Language Impairment in Children: Disconnect Between U.S. Policy Makers and Test Developers. Language, Speech, and Hearing Services in Schools, 43, 176-190.

Making Our Interventions Count or What’s Research Got To Do With It?

Two years ago I wrote a blog post entitled: “What’s Memes Got To Do With It?” which summarized key points of Dr. Alan G. Kamhi’s 2004 article: “A Meme’s Eye View of Speech-Language Pathology“. It delved into answering the following question: “Why do some terms, labels, ideas, and constructs [in our field] prevail whereas others fail to gain acceptance?”.


Today I would like to reference another article by Dr. Kamhi written in 2014, entitled “Improving Clinical Practices for Children With Language and Learning Disorders“.

This article was written to address the gaps between research and clinical practice with respect to the implementation of EBP for intervention purposes.

Dr. Kamhi begins the article by posing 10 True or False questions for his readers:

- Learning is easier than generalization.

- Instruction that is constant and predictable is more effective than instruction that varies the conditions of learning and practice.

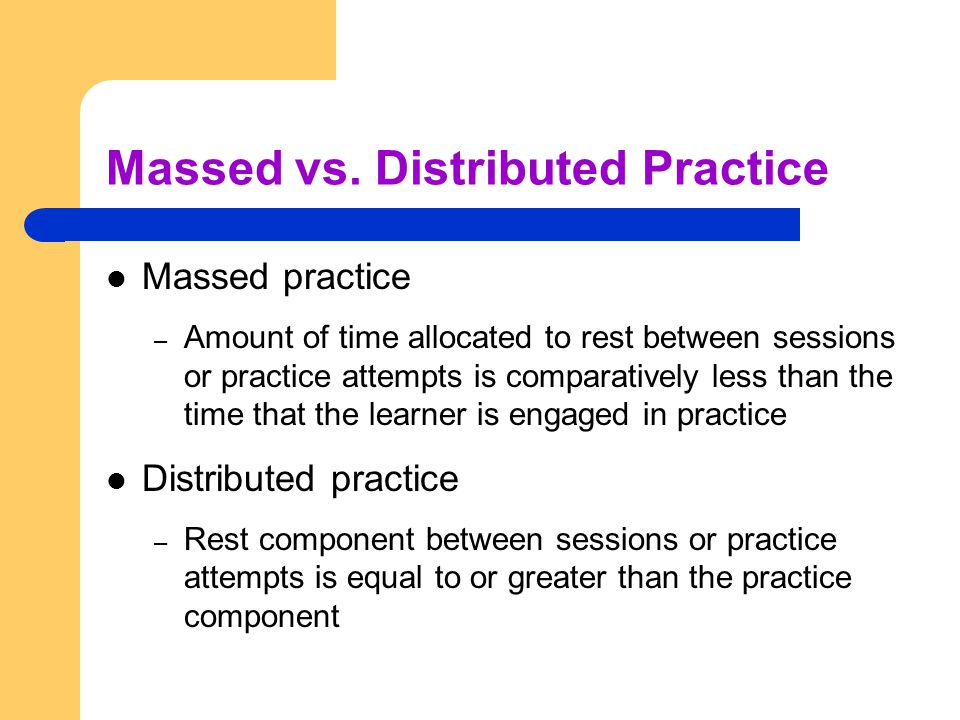

- Focused stimulation (massed practice) is a more effective teaching strategy than varied stimulation (distributed practice).

- The more feedback, the better.

- Repeated reading of passages is the best way to learn text information.

- More therapy is always better.

- The most effective language and literacy interventions target processing limitations rather than knowledge deficits.

- Telegraphic utterances (e.g., push ball, mommy sock) should not be provided as input for children with limited language.

- Appropriate language goals include increasing levels of mean length of utterance (MLU) and targeting Brown’s (1973) 14 grammatical morphemes.

- Sequencing is an important skill for narrative competence.

Guess what? Only statement 8 of the above quiz is True! Every other statement from the above is FALSE!

Now, let’s talk about why that is!

First up is the concept of learning vs. generalization. Here Dr. Kamhi notes that some clinicians in our field still possess an “outdated behavioral view of learning”, which is not theoretically or clinically useful. He explains that when we are talking about generalization, what children truly have difficulty with is “transferring narrow limited rules to new situations”. “Children with language and learning problems will have difficulty acquiring broad-based rules and modifying these rules once acquired, and they also will be more vulnerable to performance demands on speech production and comprehension (Kamhi, 1988)” (93). After all, it is not “reasonable to expect children to use language targets consistently after a brief period of intervention”, and while we hope that “language intervention [is] designed to lead children with language disorders to acquire broad-based language rules”, it is a hugely difficult task to undertake and execute.


Next, Dr. Kamhi addresses the issue of instructional factors, specifically the importance of “varying conditions of instruction and practice”. Here, he addresses the fact that while contextualized instruction is highly beneficial to learners, unless we inject variability and modify various aspects of instruction (including context, composition, duration, etc.), we run the risk of limiting our students’ long-term outcomes.

After that, Dr. Kamhi addresses the concept of distributed practice (spacing of intervention) and how important it is for teaching children with language disorders. He points out that a number of recent studies have found that “spacing and distribution of teaching episodes have more of an impact on treatment outcomes than treatment intensity” (94).


He also advocates reducing evaluative feedback to learners to “enhance long-term retention and generalization of motor skills”. While he cites research from studies pertaining to speech production, he adds that language learning could also benefit from this practice, as it would reduce conversational disruptions and tuning out on the part of the student.

From there he addresses the limitations of repetition for specific tasks (e.g., text rereading). He emphasizes how important it is for students to recall and retrieve text rather than repeatedly reread it (even without correction), as the latter results in a lack of comprehension/retention of read information.

After that, he discusses treatment intensity. Here he emphasizes that a higher dose of instruction will not necessarily result in better therapy outcomes, citing research on “learning plateaus and threshold effects in language and literacy” (95). We have seen research on this with respect to joint book reading, exposure to vocabulary words, etc. As such, at a certain point in time increased intensity may actually result in decreased treatment benefits.

His next point against processing interventions is very near and dear to my heart. Those of you familiar with my blog know that I have devoted a substantial number of posts to the lack of validity of the CAPD diagnosis (as a standalone entity) and urged clinicians to provide language-based interventions rather than specific auditory interventions, which lack treatment utility. Here, Dr. Kamhi makes a great point that: “Interventions that target processing skills are particularly appealing because they offer the promise of improving language and learning deficits without having to directly target the specific knowledge and skills required to be a proficient speaker, listener, reader, and writer.” (95) The problem is that we have numerous studies on the topic of improvement of isolated skills (e.g., auditory skills, working memory, slow processing, etc.) which clearly indicate a lack of effectiveness of these interventions. As such, “practitioners should be highly skeptical of interventions that promise quick fixes for language and learning disabilities” (96).


Now let us move on to language and particularly the models we provide to our clients to encourage greater verbal output. Research indicates that when clinicians are attempting to expand children’s utterances, they need to provide well-formed language models. Studies show that children select strong input when it’s surrounded by weaker input (the surrounding weaker syllables make stronger syllables stand out). As such, clinicians should expand upon/comment on what clients are saying with grammatically complete models vs. telegraphic productions.

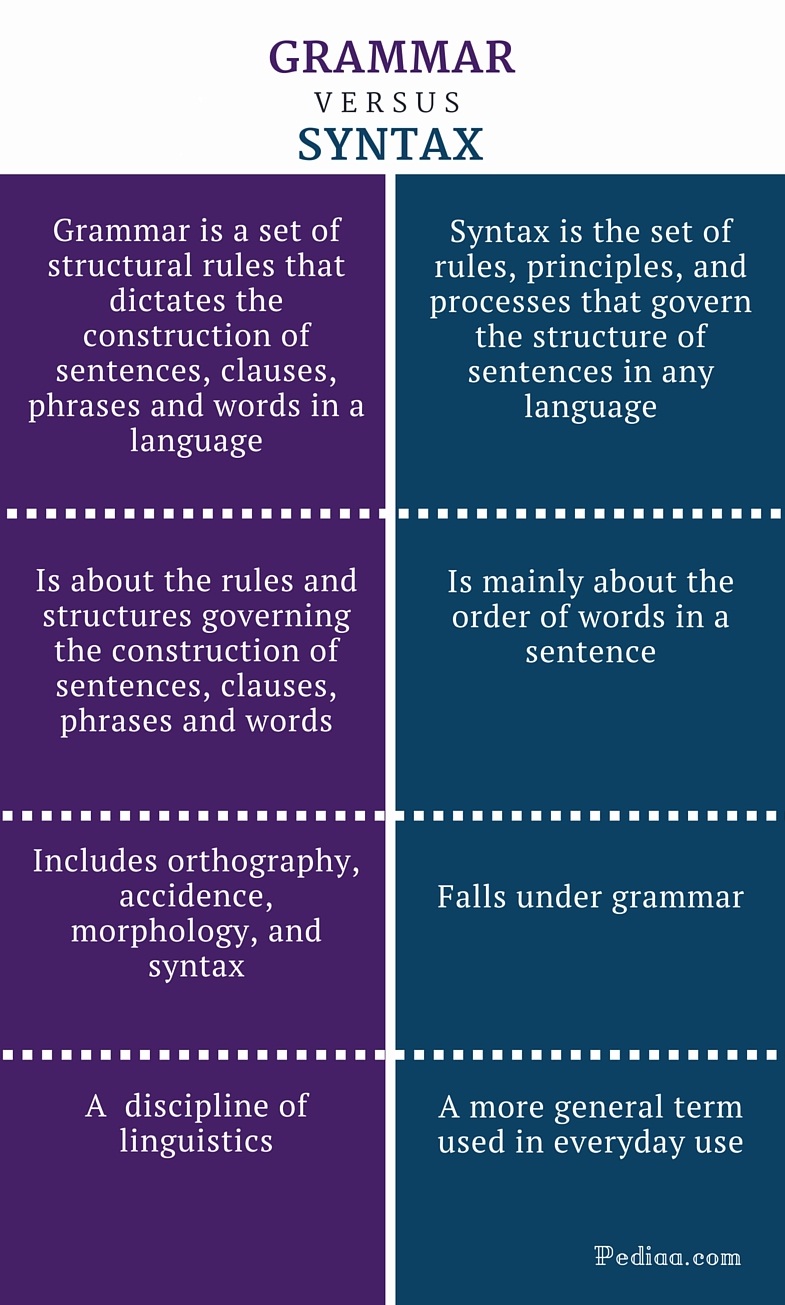

From there, let us take a look at Dr. Kamhi’s recommendations for grammar and syntax. Grammatical development goes much further than addressing Brown’s morphemes in therapy and calling it a day. As such, it is important to understand that children with developmental language disorders (DLD) (#DevLang) do not have difficulty acquiring all morphemes. Rather, studies have shown that they have difficulty learning grammatical morphemes that reflect tense and agreement (e.g., third-person singular, past tense, auxiliaries, copulas, etc.). As such, use of measures developed by Hadley & Holt, 2006; Hadley & Short, 2005 (e.g., Tense Marker Total & Productivity Score) can yield helpful information regarding which grammatical structures to target in therapy.


With respect to syntax, Dr. Kamhi notes that many clinicians erroneously believe that complex syntax should be targeted when children are much older. The Common Core State Standards do not help matters, since according to the CCSS complex syntax should be targeted in grades 2-3, which is far too late. Typically developing children begin developing complex syntax around 2 years of age and begin readily producing it around 3 years of age. As such, clinicians should begin targeting complex syntax in the preschool years and not wait until the children have mastered all morphemes and clauses (97).

Finally, Dr. Kamhi wraps up his article by offering suggestions regarding prioritizing intervention goals. Here, he explains that goal prioritization is affected by:

- clinician experience and competencies

- the degree of collaboration with other professionals

- type of service delivery model

- client/student factors

He provides a hypothetical case scenario in which the teaching responsibilities are divvied up between three professionals, with the SLP in charge of targeting narrative discourse. Here, he explains that targeting narratives does not involve targeting sequencing abilities. “The ability to understand and recall events in a story or script depends on conceptual understanding of the topic and attentional/memory abilities, not sequencing ability.” He emphasizes that sequencing is not a distinct cognitive process that requires isolated treatment. Yet many SLPs “continue to believe that sequencing is a distinct processing skill that needs to be assessed and treated.” (99)

Dr. Kamhi supports the above point by providing an example of two passages: one which describes a random order of events, and another which follows a logical order of events. He then points out that the randomly ordered story relies exclusively on attention and memory in terms of “sequencing”, while the second story reduces demands on memory due to its logical flow of events. As such, he points out that retelling deficits seemingly related to sequencing tend to actually be due to “limitations in attention, working memory, and/or conceptual knowledge”. Hence, instead of targeting sequencing abilities in therapy, SLPs should use contextualized language intervention to target aspects of narrative development (macro and microstructural elements).

Furthermore, here it is also important to note that the “sequencing fallacy” affects more than just narratives. It is very prevalent in the intervention process in the form of the ubiquitous “following directions” goal/s. Many clinicians readily create this goal for their clients due to their belief that it will result in functional therapeutic language gains. However, when one really begins to deconstruct this goal, one will realize that it involves a number of discrete abilities including: memory, attention, concept knowledge, inferencing, etc. Consequently, targeting the above goal will not result in any functional gains for the students (their memory abilities will not magically improve as a result of it). Instead, targeting specific language and conceptual goals (e.g., answering questions, producing complex sentences, etc.) and increasing the students’ overall listening comprehension and verbal expression will result in improvements in the areas of attention, memory, and processing, including their ability to follow complex directions.

There you have it! Ten practical suggestions from Dr. Kamhi ready for immediate implementation! And for more information, I highly recommend reading the other articles in the same clinical forum, all of which possess highly practical and relevant ideas for therapeutic implementation. They include:

- Clinical Scientists Improving Clinical Practices: In Thoughts and Actions

- Approaching Early Grammatical Intervention From a Sentence-Focused Framework

- What Works in Therapy: Further Thoughts on Improving Clinical Practice for Children With Language Disorders

- Improving Clinical Practice: A School-Age and School-Based Perspective

- Improving Clinical Services: Be Aware of Fuzzy Connections Between Principles and Strategies

- One Size Does Not Fit All: Improving Clinical Practice in Older Children and Adolescents With Language and Learning Disorders

- Language Intervention at the Middle School: Complex Talk Reflects Complex Thought

- Using Our Knowledge of Typical Language Development

References:

Kamhi, A. (2014). Improving clinical practices for children with language and learning disorders. Language, Speech, and Hearing Services in Schools, 45(2), 92-103

New Products for the 2017 Academic School Year for SLPs

September is quickly approaching and school-based speech language pathologists (SLPs) are preparing to go back to work. Many of them are looking to update their arsenal of speech and language materials for the upcoming academic school year.

With that in mind, I wanted to update my readers regarding all the new products I have recently created with a focus on assessment and treatment in speech language pathology. Continue reading New Products for the 2017 Academic School Year for SLPs

Treatment of Children with “APD”: What SLPs Need to Know

In recent years there has been an increase in research on the subject of diagnosis and treatment of Auditory Processing Disorders (APD), formerly known as Central Auditory Processing Disorders or CAPD.

More and more studies in the fields of audiology and speech-language pathology have begun confirming the lack of validity of APD as a standalone (or useful) diagnosis. To illustrate, in June 2015, the American Journal of Audiology published an article by David DeBonis entitled: “It Is Time to Rethink Central Auditory Processing Disorder Protocols for School-Aged Children.” In this article, DeBonis pointed out numerous inconsistencies involved in APD testing and concluded that “routine use of APD test protocols cannot be supported” and that [APD] “intervention needs to be contextualized and functional” (DeBonis, 2015, p. 124). Continue reading Treatment of Children with “APD”: What SLPs Need to Know

APD Update: New Developments on an Old Controversy

In the past two years, I wrote a series of research-based posts (HERE and HERE) regarding the validity of (Central) Auditory Processing Disorder (C/APD) as a standalone diagnosis, in which I also questioned its utility for classification purposes in the school setting.

Once again I want to reiterate that I was in no way disputing the legitimate symptoms (e.g., difficulty processing language, difficulty organizing narratives, difficulty decoding text, etc.), which the students diagnosed with “CAPD” were presenting with.

Rather, I was citing research to indicate that these symptoms were indicative of broader linguistic-based deficits, which required targeted linguistic/literacy-based interventions rather than recommendations for specific prescriptive programs (e.g., CAPDOTS, Fast ForWord, etc.), or mere accommodations.

I was also significantly concerned that overfocus on the diagnosis of (C)APD tended to obscure REAL, language-based deficits in children and forced SLPs to address erroneous therapeutic targets based on AuD recommendations, or restricted the children to the receipt of mere accommodations rather than rightful therapeutic remediation. Continue reading APD Update: New Developments on an Old Controversy

New Product Giveaway: Comprehensive Literacy Checklist For School-Aged Children

I wanted to start the new year right by giving away a few copies of a new checklist I recently created entitled: “Comprehensive Literacy Checklist For School-Aged Children”.

It was created to assist Speech Language Pathologists (SLPs) in deciding how to identify deficit areas and select assessment instruments when prioritizing a literacy assessment for school-aged children.

The goal is to eliminate administration of unnecessary or irrelevant tests and focus on the administration of instruments directly targeting the specific areas of difficulty that the student presents with.

*For the purpose of this product, the term “literacy checklist” rather than “dyslexia checklist” is used throughout this document to refer to any deficits in the areas of reading, writing, and spelling that the child may present with, in order to identify any possible difficulties in the areas of literacy as well as language.

This checklist can be used for multiple purposes.

1. To identify areas of deficits the child presents with for targeted assessment purposes

2. To highlight areas of strengths (rather than deficits only) the child presents with pre or post intervention

3. To highlight residual deficits for intervention purposes in children already receiving therapy services without further reassessment

Checklist Contents:

- Page 1 Title

- Page 2 Directions

- Pages 3-9 Checklist

- Page 10 Select Tests of Reading, Spelling, and Writing for School-Aged Children

- Pages 11-12 Helpful Smart Speech Therapy Materials

Checklist Areas:

- AT RISK FAMILY HISTORY

- AT RISK DEVELOPMENTAL HISTORY

- BEHAVIORAL MANIFESTATIONS

- LEARNING DEFICITS

- Memory for Sequences

- Vocabulary Knowledge

- Narrative Production

- Phonological Awareness

- Phonics

- Morphological Awareness

- Reading Fluency

- Reading Comprehension

- Spelling

- Writing Conventions

- Writing Composition

- Handwriting

You can find this product in my online store HERE.

Would you like to check it out in action? I’ll be giving away two copies of the checklist in a Rafflecopter Giveaway to two winners. So enter today to win your own copy!

Review of the Test of Integrated Language and Literacy (TILLS)

The Test of Integrated Language & Literacy Skills (TILLS) is an assessment of oral and written language abilities in students 6–18 years of age. Published in the fall of 2015, it is unique in that it aims to thoroughly assess skills such as reading fluency, reading comprehension, phonological awareness, spelling, and writing in school-age children. As I have been using this test since the time it was published, I wanted to take an opportunity today to share just a few of my impressions of this assessment.

First, a little background on why I chose to purchase this test so shortly after I had purchased the Clinical Evaluation of Language Fundamentals – 5 (CELF-5). Soon after I started using the CELF-5 I noticed that it tended to considerably overinflate my students’ scores on a variety of its subtests. In fact, I noticed that unless a student had a fairly severe degree of impairment, the majority of his/her scores came out either low average or slightly below average (click for more info on why this was happening HERE, HERE, or HERE). Consequently, I was excited to hear about the TILLS development, almost simultaneously through ASHA as well as the SPELL-Links ListServe. I was particularly happy because I knew some of this test’s developers (e.g., Dr. Elena Plante, Dr. Nickola Nelson) had published solid research in the areas of psychometrics and literacy respectively.

According to the TILLS developers it has been standardized for 3 purposes:

- to identify language and literacy disorders

- to document patterns of relative strengths and weaknesses

- to track changes in language and literacy skills over time

The subtests can be administered in isolation (with the exception of a few) or the test can be administered in its entirety. The administration of all 15 subtests may take approximately an hour and a half, while the administration of the core subtests typically takes ~45 minutes.

Please note that there are 5 subtests that should not be administered to students 6;0-6;5 years of age because many typically developing students are still mastering the required skills.

- Subtest 5 – Nonword Spelling

- Subtest 7 – Reading Comprehension

- Subtest 10 – Nonword Reading

- Subtest 11 – Reading Fluency

- Subtest 12 – Written Expression

However, if needed, there are several tests of early reading and writing abilities which are available for assessment of children under 6;5 years of age with suspected literacy deficits (e.g., TERA-3: Test of Early Reading Ability–Third Edition; Test of Early Written Language, Third Edition-TEWL-3, etc.).

Let’s move on to take a deeper look at its subtests. Please note that for the purposes of this review all images came directly from and are the property of Brookes Publishing Co (clicking on each of the below images will take you directly to their source).

1. Vocabulary Awareness (VA) (description above) requires students to display considerable linguistic and cognitive flexibility in order to earn an average score. It works great in teasing out students with weak vocabulary knowledge and use, as well as students who are unable to quickly and effectively analyze words for deeper meaning and come up with effective definitions of all possible word associations. Be mindful of the fact that even though the words are presented to the students in written format in the stimulus book, the examiner is still expected to read all the words to the students. Consequently, students with good vocabulary knowledge and strong oral language abilities can still pass this subtest despite the presence of significant reading weaknesses. Recommendation: I suggest informally checking the student’s word reading abilities by asking them to read all of the words, before reading all the word choices to them. This way you can informally document any word misreadings made by the student even in the presence of an average subtest score.

2. The Phonemic Awareness (PA) subtest (description above) requires students to isolate and delete initial sounds in words of increasing complexity. While this subtest does not require sound isolation and deletion in various word positions, as do tests such as the CTOPP-2: Comprehensive Test of Phonological Processing–Second Edition or the Phonological Awareness Test 2 (PAT 2), it is still a highly useful and reliable measure of phonemic awareness (as one of many precursors to reading fluency success). This is especially so because after the initial directions are given, the student is expected to remember to isolate the initial sounds in words without any prompting from the examiner. Thus, this task also indirectly tests the students’ executive function abilities in addition to their phonemic awareness skills.

3. The Story Retelling (SR) subtest (description above) requires students to do just that: retell a story. Be mindful of the fact that the presented stories have reduced complexity. Thus, unless the students possess significant retelling deficits, the above subtest may not capture their true retelling abilities. Recommendation: Consider supplementing this subtest with informal narrative measures. For younger children (kindergarten and first grade) I recommend using wordless picture books to perform a dynamic assessment of their retelling abilities following a clinician’s narrative model (e.g., HERE). For early elementary aged children (grades 2 and up), I recommend using picture books, which are first read to and then retold by the students with the benefit of pictorial but not written support. Finally, for upper elementary aged children (grades 4 and up), it may be helpful for the students to retell a book or a movie seen recently (or liked significantly) by them without the benefit of visual support altogether (e.g., HERE).

4. The Nonword Repetition (NR) subtest (description above) requires students to repeat nonsense words of increasing length and complexity. Weaknesses in the area of nonword repetition have consistently been associated with language impairments and learning disabilities due to the task’s heavy reliance on phonological segmentation as well as phonological and lexical knowledge (Leclercq, Maillart, Majerus, 2013). Thus, both monolingual and simultaneously bilingual children with language and literacy impairments will be observed to present with patterns of segment substitutions (subtle substitutions of sounds and syllables in presented nonsense words) as well as segment deletions of nonword sequences more than 2-3 or 3-4 syllables in length (depending on the child’s age).

5. The Nonword Spelling (NS) subtest (description above) requires the students to spell nonwords from the Nonword Repetition (NR) subtest. Consequently, the Nonword Repetition (NR) subtest needs to be administered prior to the administration of this subtest in the same assessment session. In contrast to real-word spelling tasks, students cannot memorize the spelling of the presented words, which are still bound by orthographic and phonotactic constraints of the English language. While this is a highly useful subtest, it is important to note that simultaneously bilingual children may present with decreased scores due to vowel errors. Consequently, it is important to analyze subtest results in order to determine whether dialectal differences, rather than the presence of an actual disorder, are responsible for the error patterns.

6. The Listening Comprehension (LC) subtest (description above) requires the students to listen to short stories and then definitively answer story questions via available answer choices, which include: “Yes”, “No”, and “Maybe”. This subtest also indirectly measures the students’ metalinguistic awareness skills as they are needed to detect when the text does not provide sufficient information to answer a particular question definitively (e.g., “Maybe” response may be called for). Be mindful of the fact that because the students are not expected to provide sentential responses to questions it may be important to supplement subtest administration with another listening comprehension assessment. Tests such as the Listening Comprehension Test-2 (LCT-2), the Listening Comprehension Test-Adolescent (LCT-A), or the Executive Function Test-Elementary (EFT-E) may be useful if language processing and listening comprehension deficits are suspected or reported by parents or teachers. This is particularly important to do with students who may be ‘good guessers’ but who are also reported to present with word-finding difficulties at sentence and discourse levels.

7. The Reading Comprehension (RC) subtest (description above) requires the students to read short stories and answer story questions in “Yes”, “No”, and “Maybe” format. This subtest is not stand-alone and must be administered immediately following the administration of the Listening Comprehension subtest. The student is asked to read the first story out loud in order to determine whether s/he can proceed with taking this subtest or discontinue due to being an emergent reader. The criterion for discontinuing the subtest is making 7 errors during the reading of the first story and its accompanying questions. Unfortunately, in my clinical experience this subtest is not always accurate at identifying children with reading-based deficits.

While I find it terrific for students with severe-profound reading deficits and/or below average IQ, a number of my students with average IQ and moderately impaired reading skills managed to pass it via a combination of guessing and luck despite being observed to misread aloud 40-60% of the presented words. Be mindful of the fact that typically such students may have up to 5-6 errors during the reading of the first story. Thus, according to administration guidelines these students will be allowed to proceed and take this subtest. They will then continue to make text misreadings during each story presentation (you will know that by asking them to read each story aloud vs. silently). However, because the response mode is in definitive (“Yes”, “No”, and “Maybe”) vs. open-ended question format, a number of these students will earn average scores by being successful guessers. Recommendation: I highly recommend supplementing the administration of this subtest with grade level (or below grade level) texts (see HERE and/or HERE), to assess the student’s reading comprehension informally.

I present a full one-page text to the students and ask them to read it to me in its entirety. I audio/video record the student’s reading for further analysis (see Reading Fluency section below). After the completion of the story I ask the student questions with a focus on main idea comprehension and vocabulary definitions. I also ask questions pertaining to story details. Depending on the student’s age I may ask them abstract/factual text questions with and without text access. Overall, I find that informal administration of grade level (or even below grade-level) texts coupled with the administration of standardized reading tests provides me with a significantly better understanding of the student’s reading comprehension abilities than administration of standardized reading tests alone.

8. The Following Directions (FD) subtest (description above) measures the student’s ability to execute directions of increasing length and complexity. It measures the student’s short-term, immediate and working memory, as well as their language comprehension. What is interesting about the administration of this subtest is that the graphic symbols (e.g., objects, shapes, letters and numbers etc.) the student is asked to modify remain covered as the instructions are given (to prevent visual rehearsal). After being presented with the oral instruction the students are expected to move the card covering the stimuli and then to execute the visual-spatial, directional, sequential, and logical if–then instructions by marking them on the response form. The fact that the visual stimuli remain covered until the last moment increases the demands on the student’s memory and comprehension. The subtest was created to simulate a teacher’s use of procedural language (giving directions) in a classroom setting (as per developers).

9. The Delayed Story Retelling (DSR) subtest (description above) needs to be administered to the students during the same session as the Story Retelling (SR) subtest, approximately 20 minutes after the SR subtest administration. Despite the relatively short passage of time between both subtests, it is considered to be a measure of long-term memory as related to narrative retelling of reduced complexity. Here, the examiner can compare student’s performance to determine whether the student did better or worse on either of these measures (e.g., recalled more information after a period of time passed vs. immediately after being read the story). However, as mentioned previously, some students may recall this previously presented story fairly accurately and as a result may obtain an average score despite a history of teacher/parent reported long-term memory limitations. Consequently, it may be important for the examiner to supplement the administration of this subtest with a recall of a movie/book recently seen/read by the student (a few days ago) in order to compare both performances and note any weaknesses/limitations.

10. The Nonword Reading (NR) subtest (description above) requires students to decode nonsense words of increasing length and complexity. What I love about this subtest is that the students are unable to effectively guess words (as many tend to routinely do when presented with real words). Consequently, the presentation of this subtest will tease out which students have good letter/sound correspondence abilities as well as solid orthographic, morphological and phonological awareness skills and which ones only memorized sight words and are now having difficulty decoding unfamiliar words as a result.

11. The Reading Fluency (RF) subtest (description above) requires students to read facts which make up simple stories fluently and correctly. Here, the key to attaining an average score is accuracy and automaticity. In contrast to the previous subtest, the words are now presented in meaningful simple syntactic contexts.

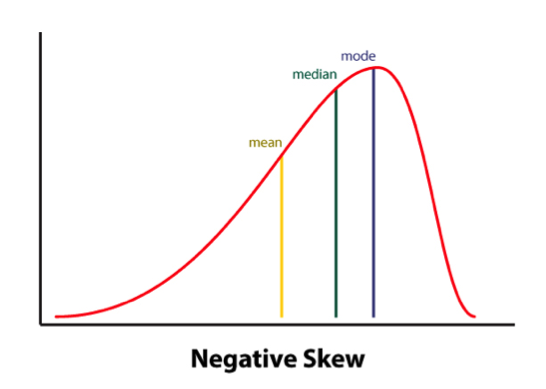

It is important to note that the Reading Fluency subtest of the TILLS has a negatively skewed distribution. As per authors, “a large number of typically developing students do extremely well on this subtest and a much smaller number of students do quite poorly.”

Thus, “the mean is to the left of the mode” (see publisher’s image below). This is why a student could earn an average standard score (near the mean) and a low percentile rank when true percentiles are used rather than NCE percentiles (Normal Curve Equivalent).

Consequently, under certain conditions (see HERE), the percentile rank (vs. the NCE percentile) will be a more accurate representation of the student’s ability on this subtest.
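To make the skew argument a bit more concrete, here is a minimal Python sketch. Please note that the scores below are made up for illustration (they are not TILLS norms), the function names are my own, and the "normal-curve percentile" is a simplified stand-in for a percentile derived under the assumption of a normal distribution, not the publisher's actual scoring procedure. It compares a true percentile rank, counted directly from the sample, with the percentile that a normal curve would imply for the same score.

```python
from statistics import NormalDist, mean, pstdev

# Made-up raw scores with a negative skew: most students score high,
# and a few score very low. These are NOT TILLS normative data.
scores = [5, 10, 15] + [38] * 5 + [40] * 10 + [42] * 12


def true_percentile(score, sample):
    """Percent of the sample scoring strictly below `score`."""
    below = sum(1 for s in sample if s < score)
    return 100 * below / len(sample)


def normal_curve_percentile(score, sample):
    """Percentile implied if the sample were normally distributed."""
    dist = NormalDist(mean(sample), pstdev(sample))
    return 100 * dist.cdf(score)


student_score = 38  # sits close to the sample mean

print(f"sample mean: {mean(scores):.1f}")
print(f"true percentile: {true_percentile(student_score, scores):.0f}")
print(f"normal-curve percentile: {normal_curve_percentile(student_score, scores):.0f}")
```

In this toy sample the mean falls below the most common score, so a student scoring near the mean looks roughly average on the normal-curve percentile while the true percentile rank is far lower.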

Indeed, due to the reduced complexity of the presented words some students (especially younger elementary aged) may obtain average scores and still present with serious reading fluency deficits.

I frequently see that in students with average IQ and good long-term memory, who by second and third grades have managed to memorize an admirable number of sight words due to which their deficits in the areas of reading appeared to be minimized. Recommendation: If you suspect that your student belongs to the above category I highly recommend supplementing this subtest with an informal measure of reading fluency. This can be done by presenting to the student a grade level text (I find science and social studies texts particularly useful for this purpose) and asking them to read several paragraphs from it (see HERE and/or HERE).

As the students are reading I calculate their reading fluency by counting the number of words they read per minute. I find it very useful as it allows me to better understand their reading profile (e.g., fast/inaccurate reader, slow/inaccurate reader, slow/accurate reader, fast/accurate reader). As the student is reading I note their pauses, misreadings, word-attack skills and the like. Then, I write a summary comparing the student’s reading fluency on both standardized and informal assessment measures in order to document the student’s strengths and limitations.
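For those who prefer to see the arithmetic spelled out, the informal fluency calculation can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, and the speed and accuracy cutoffs are placeholders that the clinician would need to replace with grade-appropriate expectations.

```python
def reading_rates(words_read, errors, seconds):
    """Return (words per minute, words correct per minute, accuracy %)."""
    if words_read <= 0 or seconds <= 0:
        raise ValueError("words_read and seconds must be positive")
    minutes = seconds / 60
    wpm = words_read / minutes
    wcpm = (words_read - errors) / minutes
    accuracy = 100 * (words_read - errors) / words_read
    return wpm, wcpm, accuracy


def reading_profile(wpm, accuracy, wpm_cutoff, accuracy_cutoff):
    """Label the reader using clinician-supplied (placeholder) cutoffs."""
    speed = "fast" if wpm >= wpm_cutoff else "slow"
    precision = "accurate" if accuracy >= accuracy_cutoff else "inaccurate"
    return f"{speed}/{precision}"


# Example: 180 words read in 2 minutes with 27 misreadings.
wpm, wcpm, accuracy = reading_rates(180, 27, 120)
print(wpm, wcpm, accuracy)

# The cutoffs below are placeholders, not published norms.
print(reading_profile(wpm, accuracy, wpm_cutoff=100, accuracy_cutoff=95))
```

Keeping the cutoffs as parameters is deliberate, since what counts as "fast" or "accurate" depends on the student's grade and the difficulty of the text.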

12. The Written Expression (WE) subtest (description above) needs to be administered to the students immediately after the administration of the Reading Fluency (RF) subtest because the student is expected to integrate a series of facts presented in the RF subtest into their writing sample. There are 4 stories in total for the 4 different age groups.

The examiner needs to show the student a different story which integrates simple facts into a coherent narrative. After the examiner reads that simple story to the student, s/he is expected to tell the student that the story is okay, but “sounds kind of choppy.” The examiner then needs to show the student an example of how the facts could be put together in a way that sounds more interesting and less choppy by combining sentences (see below). Finally, the examiner will ask the student to rewrite the story presented to them in a similar manner (e.g., “less choppy and more interesting”).

After the student finishes his/her story, the examiner will analyze it and generate the following scores: a discourse score, a sentence score, and a word score. Detailed instructions as well as the Examiner’s Practice Workbook are provided to assist with scoring as it takes a bit of training as well as trial and error to complete it, especially if the examiners are not familiar with certain procedures (e.g., calculating T-units).

Full disclosure: Because the above subtest is still essentially sentence combining, I have only used this subtest a handful of times with my students. Typically when I’ve used it in the past, most of my students fell in two categories: those who failed it completely by either copying text word for word, failing to generate any written output etc., or those who passed it with flying colors but still presented with notable written output deficits. Consequently, I’ve replaced Written Expression subtest administration with the administration of written standardized tests, which I supplement with informal grade-level expository, persuasive, or narrative writing samples.

Having said that, many clinicians may not have access to other standardized written assessments, or lack the time to administer entire standardized written measures (which may frequently take between 60 to 90 minutes of administration time). Consequently, in the absence of other standardized writing assessments, this subtest can be effectively used to gauge the student’s basic writing abilities, and if needed effectively supplemented by informal writing measures (mentioned above).

13. The Social Communication (SC) subtest (description above) assesses the students’ ability to understand vocabulary associated with communicative intentions in social situations. It requires students to comprehend how people with certain characteristics might respond in social situations by formulating responses which fit the social contexts of those situations. Essentially students become actors who need to act out particular scenes while viewing select words presented to them.

Full disclosure: Similar to my infrequent administration of the Written Expression subtest, I have also administered this subtest very infrequently to students. Here is why.

I am an SLP who works full-time in a psychiatric hospital with children diagnosed with significant psychiatric impairments and concomitant language and literacy deficits. As a result, a significant portion of my job involves comprehensive social communication assessments to catalog my students’ significant deficits in this area. Yet, past administration of this subtest showed me that a number of my students can pass this subtest quite easily despite presenting with notable and easily evidenced social communication deficits. Consequently, I prefer the administration of comprehensive social communication testing when working with children in my hospital-based program or in my private practice, where I perform independent comprehensive evaluations of language and literacy (IEEs).

Again, as I’ve previously mentioned, many clinicians may not have access to other standardized social communication assessments, or lack the time to administer entire standardized social communication measures. Consequently, in the absence of other social communication assessments, this subtest can be used to get a baseline of the student’s basic social communication abilities, and then be supplemented with informal social communication measures such as the Informal Social Thinking Dynamic Assessment Protocol (ISTDAP) or observational social pragmatic checklists.

14. The Digit Span Forward (DSF) subtest (description above) is a relatively isolated measure of short-term and verbal working memory (it minimizes demands on other aspects of language such as syntax or vocabulary).

15. The Digit Span Backward (DSB) subtest (description above) assesses the student’s working memory and requires the student to mentally manipulate the presented stimuli in reverse order. It allows the examiner to observe the strategies (e.g., verbal rehearsal, visual imagery, etc.) the students are using to aid themselves in the process. Please note that the Digit Span Forward subtest must be administered immediately before the administration of this subtest.

SLPs who have used tests such as the Clinical Evaluation of Language Fundamentals – 5 (CELF-5) or the Test of Auditory Processing Skills – Third Edition (TAPS-3) should be highly familiar with both subtests as they are fairly standard measures of certain aspects of memory across the board.

To continue, in addition to the presence of subtests which assess the student’s literacy abilities, the TILLS also possesses a number of interesting features.

For starters, there is the TILLS Easy-Score, which allows examiners to do their scoring online. It is incredibly easy and effective. After clicking on the link and filling out the preliminary demographic information, all the examiner needs to do is plug in the subtest raw scores, and the system does the rest. After the raw scores are plugged in, the system will generate a PDF document with all the data, which includes (but is not limited to) standard scores, percentile ranks, as well as a variety of composite and core scores. The examiner can then save the PDF on their device (laptop, PC, tablet, etc.) for further analysis.

Then there is the quadrant model. According to the TILLS sampler (HERE), “it allows the examiners to assess and compare students’ language-literacy skills at the sound/word level and the sentence/discourse level across the four oral and written modalities—listening, speaking, reading, and writing” and then create “meaningful profiles of oral and written language skills that will help you understand the strengths and needs of individual students and communicate about them in a meaningful way with teachers, parents, and students” (pg. 21).

Then there is the Student Language Scale (SLS), which is a one-page checklist that parents, teachers (and even students) can fill out to informally identify language- and literacy-based strengths and weaknesses. It allows for meaningful input from multiple sources regarding the student’s performance (as per IDEA 2004) and can be used not just with the TILLS but also with other tests or even in isolation (as per the developers).

Furthermore, according to the developers, because the normative sample included several special needs populations, the TILLS can be used with students diagnosed with ASD, students who are deaf or hard of hearing (see caveat), as well as students with intellectual disabilities (as long as they are functioning at age 6 and above developmentally).

According to the developers, the TILLS is aligned with Common Core Standards and can be administered as frequently as two times a year for progress monitoring (a minimum of 6 months after the first administration).

With respect to bilingualism, examiners can use it with caution with simultaneous English learners but not with sequential English learners (see further explanations HERE). Translations of the TILLS are definitely not allowed, as they will undermine test validity and reliability.

So there you have it: these are just a few of my impressions regarding this test. Now, some of you may notice that I spent a significant amount of time pointing out some of the test’s limitations. However, it is very important to note that research indicates that there is no such thing as a “perfect standardized test” (see HERE for more information). All standardized tests have their limitations.

Having said that, I think that TILLS is a PHENOMENAL addition to the standardized testing market, as it TRULY appears to assess not just language but also literacy abilities of the students on our caseloads.

That’s all from me; however, before signing off I’d like to provide you with more resources and information, which can be reviewed in reference to TILLS. For starters, take a look at Brookes Publishing TILLS resources. These include (but are not limited to) TILLS FAQ, TILLS Easy-Score, TILLS Correction Document, as well as 3 FREE TILLS Webinars. There’s also a Facebook Page dedicated exclusively to TILLS updates (HERE).

But that’s not all. Dr. Nelson and her colleagues have been tirelessly lecturing about the TILLS for a number of years, and many of their past lectures and presentations are available on the ASHA website as well as on the web (e.g., HERE, HERE, and HERE). Take a look at them, as they contain far more in-depth information regarding the development and implementation of this groundbreaking assessment.

To access the fully editable TILLS template, click HERE.

Disclaimer: I did not receive a complimentary copy of this assessment for review, nor have I received any encouragement or compensation from either Brookes Publishing or any of the TILLS developers to write it. All images of this test are the direct property of Brookes Publishing (when clicked on, all the images direct the user to the Brookes Publishing website) and were used in this post for illustrative purposes only.

References:

Leclercq A, Maillart C, Majerus S. (2013) Nonword repetition problems in children with SLI: A deficit in accessing long-term linguistic representations? Topics in Language Disorders. 33 (3) 238-254.
